Source code for this post can be found on my GitHub.

It’s great to see technologies evolving over the years. Alea.CUDA has done so in leaps and bounds since the first time I laid eyes on it a couple of years ago. At the time the name seemed unfortunate and hinted at the “aleatic” programming paradigm, meaning that you could not reliably predict the results your code would produce in consequent runs (alea is a Latin dice). It has all changed since.

I listened to an NVIDA Webinar on the subject and immediately wanted to take Alea.CUDA development for a test drive. I wanted to do it the same way I would in Python: just like we use Numba and IPython console, use F# scripting with F# Interactive.

For a test subject, I picked the same 1D LBP described in my post here. The actual algorithm for extracting the local binary pattern and its use in face recognition is described in this paper.

The algorithm consists of two parts:

- Sliding a window of size 2*n across the array and computing the pattern at each location

- Computing the histogram of these values.

Here is my F# way of doing it:

let lbp (arr : int[]) n =

if arr.Length < n * 2 + 1

then failwith ("Length should be at least " + (n * 2 + 1).ToString())

// computes a pattern around an array element i

let calcOnePattern i (around : int [])=

[0..around.Length - 1]

|> Seq.fold (fun st j -> if around.[j] > i then st ||| (1 <<< j) else st) 0

// moves sliding windoe of size '2*n' through the array and computes the pattern for each

// element at the center of a window

let prep =

[|n .. arr.Length - n - 1|]

|> Array.map (fun i -> calcOnePattern arr.[i] (Array.concat [arr.[i - n..i - 1]; arr.[i + 1..i + n]]))

// return histogram

prep

|> Array.fold (fun (st : int[]) v -> st.[v] <- st.[v] + 1; st)

(Array.zeroCreate (1 <<< n * 2))

For comparison, here is the Python code (here p is 2 ** [0..(2n-1)]), in F# code I’m simply shifting left, so no such array is necessary.

def extract_1dlbp_cpu(input, neighborhood, p):

hist = np.zeros(1 << (2 * neighborhood))

for i in range(neighborhood, len(input) - neighborhood):

left = input[i - neighborhood : i]

right = input[i + 1 : i + neighborhood + 1]

both = np.r_[left, right]

hist[np.sum(p [both >= input[i]])] += 1

return hist

Putting it on GPU

I took one of Alea.CUDA provided sample scripts and modified it slightly. This is why my code is wrapped in a class. Here it is:

type LBP(arr : int [], ?n : int) =

inherit GPUModule(GPUModuleTarget.DefaultWorker)

let mutable arr = arr

let n = defaultArg n 4

do

if arr.Length < n * 2 + 1 then failwith ("Length should be at least " + (n * 2 + 1).ToString())

member this.Arr

with get() = arr

and set (value) = arr <- value

[<Kernel;ReflectedDefinition>]

member this.Kernel (arr:deviceptr<int>) (n:int) (hist:deviceptr<int>) (len : int)=

let mutable i = blockIdx.x * blockDim.x + threadIdx.x

let mutable res = 0

if i < len - 2 * n then

i <- i + n

for j = i - n to i - 1 do

if arr.[j] >= arr.[i] then res <- res ||| (1 <<< (j - (i - n)))

for j = i + 1 to i + n do

if arr.[j] >= arr.[i] then res <- res ||| (1 <<< (j - (i - n + 1)))

__atomic_add (hist + res) 1 |> ignore

member this.Compute () =

let blockSize = 512

let gridSize = divup (arr.Length - 2 * n) blockSize

let lp = LaunchParam(gridSize, blockSize)

let hist = Array.zeroCreate (1 <<< n * 2)

use d_arr = this.GPUWorker.Malloc(arr)

use d_hist = this.GPUWorker.Malloc(hist)

this.GPULaunch <@ this.Kernel @> lp d_arr.Ptr n d_hist.Ptr (arr.Length)

d_hist.Gather()

There is not much here, at least in spirit, that any CUDA developer is not used to. We define a kernel and then launch it in a very familiar way, albeit wrapped in a slightly unfamiliar form. Nothing that is not easy to understand or adopt, though. F# quotations are used to interface with nvcc, so makes sense. Pointer arithmetic is wrapped nicely, so code like the one on line 25 above (__atomic_add) is possible. Works great, easy to use.

Here the kernel, as one would expect, computes a pattern around a single array element, at the same time contributing its result to the final histogram. Definitely room for optimization as this atomic_add is an obvious bottleneck, but I didn’t go any further since the results are quite spectacular already.

Also, I really like the “divup” function above, that figures out the number of blocks. Normally it is done as:

gridSize = (total + blockSize) / blockSize

… a small thing, but a nice convenience.

Boilerplate

Since I was doing it in F# Interactive, some boilerplate was necessary. I installed my binaries through NuGet and here are the references. The “send to F# interactive” functionality that has been around for a while now is a great help to figure things out.

#r "System.Configuration.dll" #I @"packages\Alea.Cuda.2.1.2.3274\lib\net40" #I @"packages\NUnit.2.6.3\lib\" #I @"packages\FsUnit.1.3.0.1\Lib\Net40" #r "Alea.CUDA.dll" #r "nunit.framework.dll" #r "FsUnit.NUnit.dll" #load @"packages\FSharp.Charting.0.90.12\FSharp.Charting.fsx" open System open System.IO open Alea.CUDA open Alea.CUDA.Utilities open FsUnit open FSharp.Charting open System.Diagnostics Alea.CUDA.Settings.Instance.Resource.AssemblyPath <- Path.Combine(__SOURCE_DIRECTORY__, @"packages\Alea.Cuda.2.1.2.3274\private") Alea.CUDA.Settings.Instance.Resource.Path <- Path.Combine(__SOURCE_DIRECTORY__, @"release")

The last two lines come straight from Alea tutorial sample and are necessary to hook up the jit compiler. That’s it!

Experiments & results

I used the following functions to drive the experiments:

// random array of length n

let generate n =

let rng = Random(int DateTime.Now.Ticks)

Array.init n (fun _ -> rng.Next())

// experiment: run from 10 ** low to 10 ** high array length

let experiment low high =

if low >= high || low < 0 then failwith "must be: low < high, both non-negative"

// salt it

let arr = [|0..1000|]

use lb = new LBP(arr)

lb.Compute() |> ignore

let sw = Stopwatch()

let cpuTimes = Array.zeroCreate (high - low + 1)

let gpuTimes = Array.zeroCreate (high - low + 1)

for i = low to high do

let range = int (10.0 ** float i)

let arr = generate range

lb.Arr <- arr

// Run on CPU

sw.Restart()

printfn "Legnth: %d" range

printfn "-----------"

let h1 = lbp arr 4

sw.Stop()

let idx = i - low

cpuTimes.[idx] <- range, sw.Elapsed.TotalSeconds

printfn "Computed on CPU: %0.5f sec" (snd cpuTimes.[idx])

//Run on GPU

sw.Restart()

let h2 = lb.Compute()

sw.Stop()

gpuTimes.[idx] <- range, float sw.Elapsed.TotalSeconds

printfn "Computed on GPU: %0.5f sec" (snd gpuTimes.[idx])

printfn ""

// make sure we are ok

should equal h1 h2

Chart.Combine(

[Chart.Line(cpuTimes, Name="CPU")

Chart.Line(gpuTimes, Name="GPU")

]

)

.WithYAxis(Log=true, Title = "sec")

.WithXAxis(Min=10.**float low, Log=true, Title = "length")

.WithLegend(InsideArea=true)

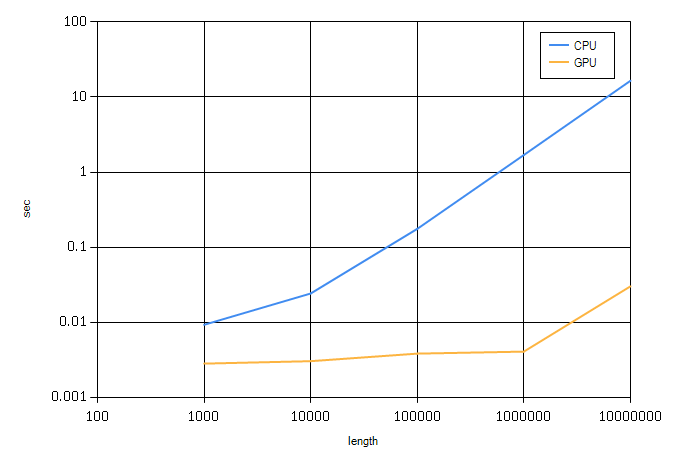

The helper “generate” generates a random integer array of arbitrary length, and the “experiment” function runs the experiments on arrays of sizes 10 ** [low..high]

with

experiment 3 7

I got:

Legnth: 1000

-----------

Computed on CPU: 0.00937 sec

Computed on GPU: 0.00285 sec

Legnth: 10000

-----------

Computed on CPU: 0.02445 sec

Computed on GPU: 0.00308 sec

Legnth: 100000

-----------

Computed on CPU: 0.17697 sec

Computed on GPU: 0.00388 sec

Legnth: 1000000

-----------

Computed on CPU: 1.70085 sec

Computed on GPU: 0.00412 sec

Legnth: 10000000

-----------

Computed on CPU: 16.53772 sec

Computed on GPU: 0.03045 sec

Here the straight F# mode runs about twice as fast as pure Python:

Length: 1000

--------------

Finished on CPU: time: 0.02263s

Length: 10000

--------------

Finished on CPU: time: 0.28701s

Length: 100000

--------------

Finished on CPU: time: 2.88549s

Length: 1000000

--------------

Finished on CPU: time: 29.97346s

The GPU performances are about comparable between Python and Numba and F# with Alea.CUDA. Same card: GTX Titan with 6Gb RAM, 14 SMPs

Of course a mandatory chart produced with FSharpChart and the following small scriptlet:

Chart.Combine(

[Chart.Line(cpuTimes, Name="CPU")

Chart.Line(gpuTimes, Name="GPU")

]

)

.WithYAxis(Log=true, Title = "sec")

.WithXAxis(Min=10.**float low, Log=true, Title = "length")

.WithLegend(InsideArea=true)

(Still not sure, why my X axis starts from 100 and not from 1000, but I guess it’s minor :)).

A great experience doing all this overall: smooth and painless.

One thought on “Fun with Alea.CUDA, F# Interactive, Charts”