This holiday season my brief indulgence was solving the Advent of Code puzzles. One was about the look-and-say sequence. It starts with “1” and grows as follows:

1 is read off as “one 1” or 11.

11 is read off as “two 1s” or 21.

21 is read off as “one 2, then one 1” or 1211.

1211 is read off as “one 1, then one 2, then two 1s” or 111221.

111221 is read off as “three 1s, then two 2s, then one 1” or 312211.

This has been analyzed. Members’ length grows exponentially, so

Advent of Code Day 10 is asking to output the length of the 41st member of this sequence, starting from 1113122113. And then, in the second part – of the 51st. This is simple enough, however, if one is not careful… It may take a while.

I wrote a solution fast enough, however I wasn’t satisfied with it at all: it looked too generic in style: it could be written this way in any language. The whole point of the indulgence being the aesthetic enjoyment of writing F#.

I looked around and found the following post, which I am delighted to quote as an example of the author’s amazing style and command of the language

let read (input : string) =

input

|> Seq.fold (fun acc x ->

match acc with

| (n, x')::tl when x = x' -> (n+1, x')::tl

| _ -> (1, x)::acc) []

|> List.rev

|> Seq.collect (fun (n, x) -> sprintf "%d%c" n x)

|> fun xs -> System.String.Join("", xs)

If you take a moment to read through it you will be admiring it as much as I was.

Here is my boringly generic solution.

let genNext (s : List<byte>) =

let mutable i = 0y

let mutable c = s.[0]

let reps = List<byte>()

for ch in s do

if c <> ch then

reps.Add(byte i)

reps.Add(c)

c <- ch

i <- 0y

i <- i + 1y

reps.Add(byte i)

reps.Add(c)

reps

If you look at mine – it is totally un-F#. It may as well have been written in C++! It is not motivated by a sense of style, or good taste, or sadly, by a deep sense of the language. It is, however, motivated by performance. The key to great performance is, of course, to know well ahead of time where your performance goes. In this case we do know it: since the structure holding the next sequence member grows in length exponentially, we would like to minimize all operations related to its manipulation. As far as reads – we cannot escape O(n) reads. Same for writes at every step. And now we are faced with a dilemma that a C++ (and possibly a C#) programmer would never face: are we being wasteful with our memory. In fact, so wasteful that it can bog us down? The answer in the first case is – yes, of course. In the second – no.

The first solution that would satisfy any functional purist, creates and destroys its immutable structures every step of the way. Not only that, its input is necessarily a string and so we also need to serialize the sequence we come up with into a string for the sole purpose of deconstructing it again in the next iteration.

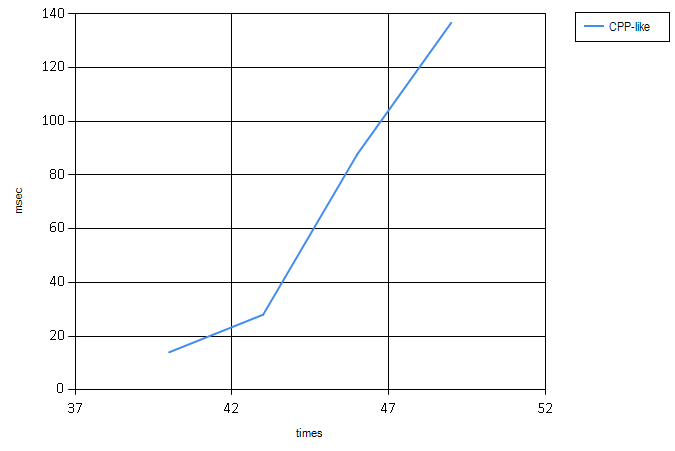

At the same time, the second solution, using only an almost minimal amount of memory (it is provable, that the sequence never contains any numbers beyond 1, 2, and 3, so bytes are good), uses exactly one new structure which it returns. We actually don’t need to serialize it back to a string! Let’s see how these compare time wise (number of iterations over the sequence is along the X axis):

It is not unexpected, still kinda staggering. To zoom into the boring solution (it is still exponential):

So this raises a bunch of questions: as our technology evolves, are we going to be able to write more and more aesthetically impeccable (and shorter!) code without caring much for the real world? Or are performance concerns going to ever be looming somewhere very close, forcing more sensible choices? Who is the developer of the future? Is s/he going to be required to write great code but hold more down-to-earth alternatives in mind, effectively cancelling her immunity to trials and tribulations of machine machine-oriented languages like C++ or even C, something that Java set out to free us from ages ago? I don’t know, but I believe the answer for the nearest future at least, should be sought in the world of algorithms. Algorithmic and especially data structural efficiency would help us write in style while still holding on to great performance gains.

Hi, thanks for the link and love the perf comparison between the solutions!

Regarding writing concise/functional code vs performant/imperative code, I just wanna throw in my two pence here.

There are opportunities in the compiler/library space that can help marry the two often opposing goals – by translating/compiling your functional-style code into more performant imperative-style alternative behind the scene.

A good example is F#’s Streams https://github.com/nessos/Streams library which takes your functional-style pipelines and turns them into imperative-style for/while loops.

Another example is the F# compiler itself, e.g. it will compile recursive functions to while loops where possible, or optimizes pattern matching to switch statements, or optimize Option.None into null, etc.

As for programmers of today and tomorrow, knowing how to express solutions with high-level, abstract code whilst at the same time knowing how to write a more performant alternative in mind will always be advantageous in my view. The trick, of course, is identifying the performance critical sections of your code (Knuth’s 97-3 rule, “we should not pass up opportunities in that critical 3%”) and focus optimization in those areas.

Thankfully we have pretty good tooling in this space already, and Greg Young’s PrivateEye http://www.privateeye.io/ profiler is going to make it even easier for F# programmers.

However, depending on what you’re doing, the most important optimizsation might even happen outside of your code. In my line of work – building data-centric backend services – the most significant factors towards latencies to the users are: 1) network 2) data access 3) inter-service dependency, and lastly, 4) my code.

Since most of us in this space are essentially implementing a data-shipping paradigm of some sort, the biggest wins (for the smallest investment) might be having edge nodes close to your users; improving caching strategy; improving data access time by adopting a different database solution, for instance, even switching to a more efficient network protocol such as protocol-buffer is going to beat any code-level optimizations hands down.

There are also other factors to consider.

For example, besides algorithms and data structures, understanding how your code is executed on the machine (what Martin Thompson describes as “Mechanical Sympathy”) is just important. By aligning your data access pattern to how the cache works, Martin estimates you can easily blur the difference between an O(n^2) and O(n) implementation.

And the hardware architecture is also changing, and as more and more cores are added to the hardware (in fact, I often hear that the only reason why hardware people haven’t added even more cores is because the software evolution hasn’t caught up yet), the way we write software also needs to evolve to take full advantage of this change – and that’s another often-used argument for FP in that it’s better suited for this new world.