TensorFlow C++ Video Detector

It is time to validate all this arduous setup work, run our first C++ detector, and reap the first benefits. You may clone this repository, which is a fork of an earlier repository, modified and brought up to date.

Ensuring the Right Build Paths

Note the following excerpt from CMakeLists.txt:

set(MYHOME $ENV{HOME})

# IMPORTANT: Protobuf includes. Depends on the anaconda path

# This is Azure DLVM (not sure if DSVM is the same)

include_directories("/data/anaconda/envs/py36/lib/python3.6/site-packages/tensorflow/include/")

# This is a standard install of Anaconda with p36 environment

include_directories("${MYHOME}/anaconda3/envs/py36/lib/python3.6/site-packages/tensorflow/include/")

The include path we set here points at the headers bundled with the installed tensorflow package, which solves the protobuf compilation problems. In theory, if the right protobuf version is installed, this is not necessary. I can't quite get that right, though, so I use this as a band-aid. It is definitely something that should be fixed properly.

OpenCV Mat -> TensorFlow Tensor

In Python we used NumPy arrays to store decoded frames and pass them to TensorFlow. In C++ there is no such shared container, so we need to give this a second thought.

Check out this function, which bridges between video frames decoded by OpenCV and TensorFlow tensors:

Status readTensorFromMat(const Mat &mat, Tensor &outTensor) {

// Trick from https://github.com/tensorflow/tensorflow/issues/8033

uint8_t *p = outTensor.flat<uint8_t>().data();

Mat fakeMat(mat.rows, mat.cols, CV_8UC3, p);

mat.copyTo(fakeMat);

return Status::OK();

}

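Tensors and Mats have a compatible structure: a uint8 tensor of shape [1, rows, cols, 3] and a continuous CV_8UC3 Mat are both densely packed and row-major, with the three channel values of each pixel stored together. So we wrap the tensor's buffer in a Mat header (fakeMat) and let copyTo write the frame straight into it. This only works if outTensor was already allocated as uint8 with exactly that shape before the call.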
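To make the layout argument concrete, here is a minimal sketch that uses plain byte buffers instead of OpenCV or TensorFlow. The helper names hwcOffset and copyRowsToPacked are hypothetical and exist only for this illustration. The sketch shows where a given pixel lands in a packed height x width x channels buffer, and what a row-aware copy has to do when the source rows carry padding, as they may in a non-continuous Mat.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <cstring>

// Offset of (row, col, channel) in a densely packed, row-major
// height x width x channels buffer. The assumption illustrated here is that
// a [1, H, W, C] uint8 tensor and a continuous 8-bit Mat both use this layout.
std::size_t hwcOffset(std::size_t row, std::size_t col, std::size_t channel,
                      std::size_t cols, std::size_t channels) {
    return (row * cols + col) * channels + channel;
}

// Copy an image whose rows may be padded (srcStep bytes per row) into a
// densely packed destination buffer. This is an illustration of a row-aware
// copy, not OpenCV's actual implementation.
void copyRowsToPacked(const std::uint8_t *src, std::size_t srcStep,
                      std::size_t rows, std::size_t cols, std::size_t channels,
                      std::uint8_t *dst) {
    const std::size_t rowBytes = cols * channels;
    assert(srcStep >= rowBytes);  // a row can never be shorter than its pixels
    for (std::size_t r = 0; r < rows; ++r) {
        std::memcpy(dst + r * rowBytes, src + r * srcStep, rowBytes);
    }
}
```

When the destination is the tensor's own buffer, as in readTensorFromMat, the copy doubles as the conversion, and no intermediate buffer is needed.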

Build and Run

We can now build the app:

cd <app dir>

mkdir build

cd build

cmake .. # cmake -DCMAKE_BUILD_TYPE=Debug ..

make

Cloning the repository downloads frozen_inference_graph.pb as well as classes.pbtxt for the Inception V2 SSD detector into the demo/ssd_inception_v2 subfolder. A sample video is downloaded into the same folder as well. These paths are set at the top of the main function; you can change them there or, better still, set up command line parameter parsing.

string ROOTDIR = "../";

string LABELS = "demo/ssd_inception_v2/classes.pbtxt";

string GRAPH = "demo/ssd_inception_v2/frozen_inference_graph.pb";

string VIDEO_FILE = "demo/ssd_inception_v2/ride_2.mp4";
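As a starting point for the command line parsing mentioned above, here is a minimal sketch that uses only the standard library. The DetectorPaths struct, the parsePaths function and the flag names (--root, --labels, --graph, --video) are all hypothetical and not part of the repository. The defaults mirror the hard-coded values.

```cpp
#include <cstddef>
#include <string>
#include <vector>

// Paths used by the detector; defaults match the values hard-coded in main.
struct DetectorPaths {
    std::string rootDir = "../";
    std::string labels = "demo/ssd_inception_v2/classes.pbtxt";
    std::string graph = "demo/ssd_inception_v2/frozen_inference_graph.pb";
    std::string videoFile = "demo/ssd_inception_v2/ride_2.mp4";
};

// Parse "--name=value" arguments (hypothetical flag names). Returns false on
// a malformed or unrecognized argument so the caller can print usage and exit.
bool parsePaths(const std::vector<std::string> &args, DetectorPaths &paths) {
    for (const std::string &arg : args) {
        const std::size_t eq = arg.find('=');
        if (arg.rfind("--", 0) != 0 || eq == std::string::npos) return false;
        const std::string name = arg.substr(2, eq - 2);
        const std::string value = arg.substr(eq + 1);
        if (name == "root") paths.rootDir = value;
        else if (name == "labels") paths.labels = value;
        else if (name == "graph") paths.graph = value;
        else if (name == "video") paths.videoFile = value;
        else return false;
    }
    return true;
}
```

In main, the arguments could be collected with std::vector<std::string> args(argv + 1, argv + argc) and passed to parsePaths. Any path that is not overridden keeps its default.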

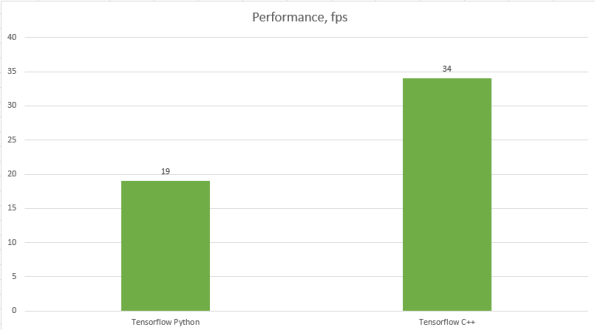

We are now running faster than real time, at ~34 fps on a Titan V.
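For reference, a throughput figure like this can be computed with a couple of small helpers. This is only a sketch: framesPerSecond and fasterThanRealTime are hypothetical names, and it is not necessarily how the app itself measures its frame rate. "Faster than real time" is taken here to mean that the processing rate is at least the video's native frame rate, which OpenCV can report through cv::VideoCapture::get(cv::CAP_PROP_FPS).

```cpp
#include <chrono>

// Average frames per second for frameCount frames processed in `elapsed`.
// Returns 0 when no time has elapsed, to avoid dividing by zero.
double framesPerSecond(long frameCount, std::chrono::duration<double> elapsed) {
    if (elapsed.count() <= 0.0) return 0.0;
    return static_cast<double>(frameCount) / elapsed.count();
}

// True when the processing throughput keeps up with the video's own rate.
bool fasterThanRealTime(double processedFps, double videoFps) {
    return processedFps >= videoFps;
}

// Usage sketch (inside the frame loop of the app):
//   auto start = std::chrono::steady_clock::now();
//   ... decode and run detection on each frame, incrementing a counter ...
//   double fps = framesPerSecond(count, std::chrono::steady_clock::now() - start);
```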

Next Steps

Time to get serious.

While our video decoding is efficient, we are making two completely unnecessary memory copies: GPU -> system memory once a frame is decoded, and then system memory -> GPU when we start running the frame through the TensorFlow graph. Incidentally, all regular TensorFlow pipelines assume that the graph is fed from system memory.

The next step is to eliminate these copies and learn to feed the TensorFlow graph directly from the GPU.
